The Thinking Shift Behind Successful AI Agent Investments

- Jan 24


AI agents are no longer speculative technologies relegated to R&D labs or sci-fi headlines. They have crossed the threshold into strategic corporate infrastructure. As enterprises enter 2026, agentic systems are already reshaping workflows, decisions, and outcomes. Yet a surprising number of product teams still pitch agents as cool capabilities rather than as C-suite investments with tangible financial impact.

Let’s be clear: CEOs aren’t interested in features. They fund revenue velocity, operating leverage, and margin expansion. And in 2026, boardrooms are tightening scrutiny on AI ROI in a way we haven’t seen before. Investors are demanding measurable returns, regulatory clarity, and defensible value, pushing AI investment decisions beyond hype and into demonstrable economics.

Meanwhile, in the UAE, a global AI leader with some of the highest adoption rates in the world, organisations aren’t just experimenting with AI; they’re operationalising it at scale. A recent global adoption report places the UAE at the top of AI diffusion rankings, supported by multi-billion-dollar infrastructure investments from major tech partners.

In this context, product leaders must pitch AI agents in the language of revenue, costs, and financial timelines, not speculative productivity gains. Below are five strategic ways to shape AI agent investment cases that secure funding and move pilots into production.

What It Actually Takes to Budget for an AI Agent

Before any credible investment discussion can happen, cost modelling has to reflect reality.

AI agents are not a software add-on. They are full-stack systems that combine infrastructure, cognitive design, and ongoing human oversight. Treating them otherwise is where most early failures begin.

Effective budgeting is typically done in partnership with an AI programme manager who understands the end-to-end cost structure, including:

Data sovereignty and compliance requirements

Subscription and licensing costs

LLM consumption across environments

Compute, vector, and transient storage

Design, build, and retraining resources

When these elements are not surfaced early, organisations encounter predictable issues: token overruns, latency constraints, brittle behaviour, and rising run-costs that undermine confidence long before value is realised. From a product ownership perspective, design, build, and retraining effort is not overhead but part of the core build equation. It reflects the human architecture required to make an agent reliable, trusted, and aligned with business outcomes.

At a minimum, this typically includes:

Information Architecture to structure memory, prompts, escalation logic, and knowledge ingestion

Product Management to own the agent lifecycle, translate SME expertise into repeatable systems, and manage delivery from design through deployment

When leaders bring forward a financial model tied to real roles, run-costs, and outcome metrics, approval becomes about confidence rather than persuasion.
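To make that financial model concrete, the cost lines listed above can be rolled into a simple monthly run-cost estimate. The sketch below is a minimal illustration in Python, assuming a blended per-million-token LLM price and loaded hourly rates for the roles named above. The class name `AgentCostModel` and every figure in it are hypothetical placeholders, not benchmarks.

```python
from dataclasses import dataclass, field


@dataclass
class AgentCostModel:
    """Hypothetical monthly run-cost model for one agent. All figures are assumptions."""
    licensing: float               # subscription and licensing, per month
    compliance: float              # data sovereignty and compliance overhead, per month
    compute_and_storage: float     # compute, vector, and transient storage, per month
    requests_per_month: int        # expected agent invocations
    tokens_per_request: int        # average input + output tokens per invocation
    usd_per_million_tokens: float  # assumed blended LLM price
    role_hours: dict[str, float] = field(default_factory=dict)    # monthly hours per role
    hourly_rates: dict[str, float] = field(default_factory=dict)  # keys must match role_hours

    def llm_cost(self) -> float:
        """LLM consumption: total monthly tokens priced per million."""
        tokens = self.requests_per_month * self.tokens_per_request
        return tokens / 1_000_000 * self.usd_per_million_tokens

    def people_cost(self) -> float:
        """Design, build, and oversight roles priced at their hourly rates."""
        return sum(hours * self.hourly_rates[role] for role, hours in self.role_hours.items())

    def monthly_total(self) -> float:
        return (self.licensing + self.compliance + self.compute_and_storage
                + self.llm_cost() + self.people_cost())


# Illustrative inputs only; replace them with your own quotes and rates.
model = AgentCostModel(
    licensing=2_000,
    compliance=500,
    compute_and_storage=800,
    requests_per_month=20_000,
    tokens_per_request=3_000,
    usd_per_million_tokens=5.0,
    role_hours={"information architecture": 20, "product management": 40},
    hourly_rates={"information architecture": 90, "product management": 100},
)
print(f"LLM consumption: ${model.llm_cost():,.0f}")         # prints: LLM consumption: $300
print(f"Monthly run-cost: ${model.monthly_total():,.0f}")   # prints: Monthly run-cost: $9,400
```

Doubling `tokens_per_request` to simulate a token overrun raises the LLM line from $300 to $600 a month in this example, which is the kind of sensitivity worth showing before run-costs surprise anyone.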

1. Replace ROI Theatre With Full-Funnel Revenue Logic

Claims like “this will save time” or “this will automate work” are rarely decisive at executive level. They are directionally true, but commercially vague. Senior leaders are focused on financial velocity: how quickly decisions turn into revenue and how consistently margins scale. AI agents need to be positioned in that context. A practical starting point is to map the commercial funnel and identify where expertise, latency, or inconsistency currently slows progress.

For example, in a sales environment:

pre-sale discovery

pricing and approval cycles

proposal generation

onboarding and post-sale support

Agents that are embedded at these points can retrieve precedent decisions, surface playbooks, and generate compliant responses in real time. In practice, well-designed sales enablement agents have reduced deal cycle times by 25–40%, directly improving pipeline conversion and revenue predictability.

What resonates with executives is not what the agent is, but what it does to compress timelines and unlock capacity. A simple before-and-after funnel view often makes that impact immediately clear.
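A before-and-after funnel view can be as simple as stage durations side by side. The Python sketch below uses the four sales stages listed above with made-up median durations in days; these are illustrative numbers, not measurements, and `funnel_comparison` is a hypothetical helper name.

```python
# Illustrative median days per funnel stage; not measured data.
BEFORE = {
    "pre-sale discovery": 10,
    "pricing and approval cycles": 12,
    "proposal generation": 8,
    "onboarding and post-sale support": 20,
}
AFTER = {
    "pre-sale discovery": 8,
    "pricing and approval cycles": 6,
    "proposal generation": 4,
    "onboarding and post-sale support": 16,
}


def funnel_comparison(before: dict[str, float], after: dict[str, float]):
    """Return per-stage (stage, days_before, days_after, % reduction) rows plus totals."""
    rows = []
    for stage, days_before in before.items():
        days_after = after[stage]
        reduction_pct = 100 * (days_before - days_after) / days_before
        rows.append((stage, days_before, days_after, reduction_pct))
    total_before = sum(before.values())
    total_after = sum(after.values())
    total_pct = 100 * (total_before - total_after) / total_before
    return rows, total_before, total_after, total_pct


rows, total_before, total_after, total_pct = funnel_comparison(BEFORE, AFTER)
for stage, b, a, pct in rows:
    print(f"{stage:<34}{b:>4}d -> {a:>3}d  ({pct:.0f}% shorter)")
print(f"{'end-to-end cycle':<34}{total_before:>4}d -> {total_after:>3}d  ({total_pct:.0f}% shorter)")
```

Because the end-to-end figure is a sum of stages, the same table also shows where compression actually happens, which is usually the question that follows the headline number.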

2. Treat the Agent as an Operational Asset

AI agents are not features. They operate continuously, influence decisions, and require governance. A more accurate framing is to treat an agent as a productive asset: effectively, a micro-business inside the organisation. This requires two complementary views:

Cost modelling: understanding the total cost of ownership (TCO) across a 12–36 month horizon, including model usage, retraining, storage, engineering time, and governance overhead.

Outcome modelling: quantifying what the agent returns, such as SME time released, increased decision throughput, and expanded revenue capacity.

For a sales enablement agent, leadership teams tend to ask: "Are deals closing faster?", "Are compliance checks more consistent?", "Is onboarding time materially reduced?" When framed this way, the agent can be assessed alongside other capital investments as a governed asset with a return profile, rather than an experimental tool.
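Both views can sit in one small model. This Python sketch assumes a one-off build cost, a flat monthly run-cost, a fixed retraining cadence, and monetises only SME time released; decision throughput and revenue capacity are left out because they need organisation-specific conversion rates. `AgentAsset` and all figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class AgentAsset:
    """Hypothetical cost and outcome assumptions for one agent (all figures illustrative)."""
    build_cost: float                    # one-off design and build
    monthly_run_cost: float              # model usage, storage, governance overhead
    retraining_cost: float               # cost of each retraining / knowledge refresh
    retrain_every_months: int            # assumed retraining cadence
    sme_hours_released_per_month: float  # outcome: SME time freed up
    sme_hourly_cost: float               # loaded cost of one SME hour

    def tco(self, months: int) -> float:
        """Total cost of ownership; counts one retraining per full cadence period."""
        retrainings = months // self.retrain_every_months
        return self.build_cost + self.monthly_run_cost * months + self.retraining_cost * retrainings

    def outcome_value(self, months: int) -> float:
        """Value of SME time released over the horizon (other outcomes not modelled)."""
        return self.sme_hours_released_per_month * self.sme_hourly_cost * months


asset = AgentAsset(build_cost=50_000, monthly_run_cost=3_000, retraining_cost=4_000,
                   retrain_every_months=6, sme_hours_released_per_month=150,
                   sme_hourly_cost=60)

for months in (12, 24, 36):
    cost, value = asset.tco(months), asset.outcome_value(months)
    print(f"{months} months: TCO ${cost:,.0f}, value ${value:,.0f}, net ${value - cost:,.0f}")
# 12 months: TCO $94,000, value $108,000, net $14,000
# 24 months: TCO $138,000, value $216,000, net $78,000
# 36 months: TCO $182,000, value $324,000, net $142,000
```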

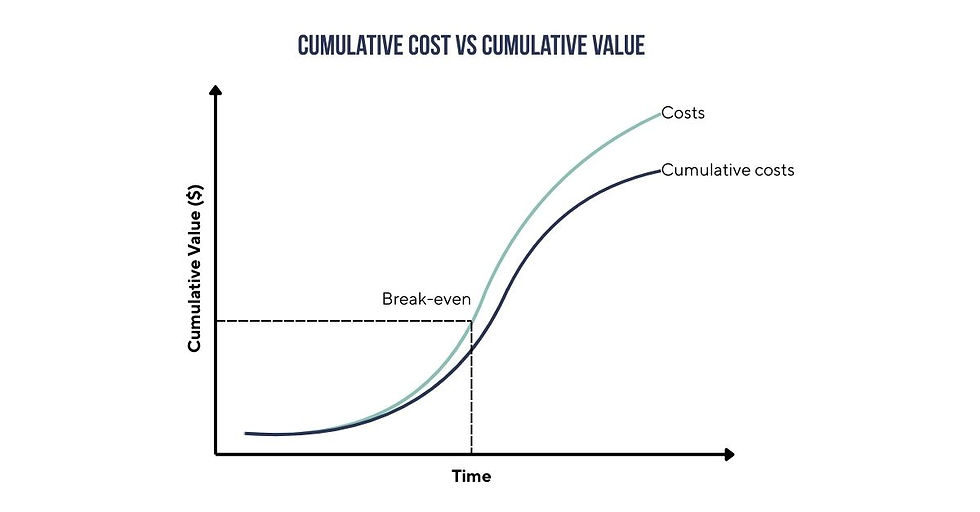

3. Visualize Break-Even and ROI Timelines

Many AI proposals fail not because the technology lacks merit, but because executives can’t see when they will get their money back. Use financial modelling such as net present value (NPV) and internal rate of return (IRR) over a 24–36 month horizon. A credible example would be:

Total investment of $180,000 over two years

Annual SME time offload of 3,600 hours

Break-even achieved by month 10

Three-year IRR of 70%+

Presented visually, this allows AI agents to be evaluated against other digital or operational investments on equal footing.
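Headline figures like these come from a handful of standard calculations. The Python sketch below computes NPV, IRR (by bisection), and the break-even month from a list of monthly cash flows. The cash flows are hypothetical (a $90,000 upfront outlay followed by $4,000 of monthly net benefit for 36 months) and are not the figures behind the example above; the 10% annual discount rate is likewise an assumption.

```python
from typing import Optional


def npv(rate: float, cash_flows: list[float]) -> float:
    """Net present value: cash_flows[0] occurs now, cash_flows[t] after t periods."""
    return sum(cf / (1 + rate) ** t for t, cf in enumerate(cash_flows))


def irr(cash_flows: list[float], low: float = -0.99, high: float = 10.0,
        tol: float = 1e-9) -> float:
    """Per-period internal rate of return, found by bisection.

    Assumes a conventional profile (an outflow followed by inflows), so NPV
    changes sign exactly once between `low` and `high`.
    """
    f_low = npv(low, cash_flows)
    if f_low * npv(high, cash_flows) > 0:
        raise ValueError("IRR is not bracketed by [low, high]")
    while high - low > tol:
        mid = (low + high) / 2
        f_mid = npv(mid, cash_flows)
        if f_low * f_mid <= 0:
            high = mid                # root lies in [low, mid]
        else:
            low, f_low = mid, f_mid   # root lies in [mid, high]
    return (low + high) / 2


def break_even_month(cash_flows: list[float]) -> Optional[int]:
    """First period in which cumulative (undiscounted) cash flow turns non-negative."""
    cumulative = 0.0
    for month, cf in enumerate(cash_flows):
        cumulative += cf
        if cumulative >= 0:
            return month
    return None


# Hypothetical profile: $90,000 upfront, then $4,000 net monthly benefit for 36 months.
flows = [-90_000.0] + [4_000.0] * 36
monthly_rate = 1.10 ** (1 / 12) - 1        # assumed 10% annual discount rate
monthly_irr = irr(flows)
annual_irr = (1 + monthly_irr) ** 12 - 1   # annualise the monthly IRR

print(f"NPV at 10%/yr: ${npv(monthly_rate, flows):,.0f}")
print(f"IRR: {annual_irr:.0%} per year")
print(f"Break-even: month {break_even_month(flows)}")  # prints: Break-even: month 23
```

Bisection is used instead of a library solver to keep the sketch dependency-free; it is only reliable when NPV changes sign once, which holds for a single-outflow profile like this one.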

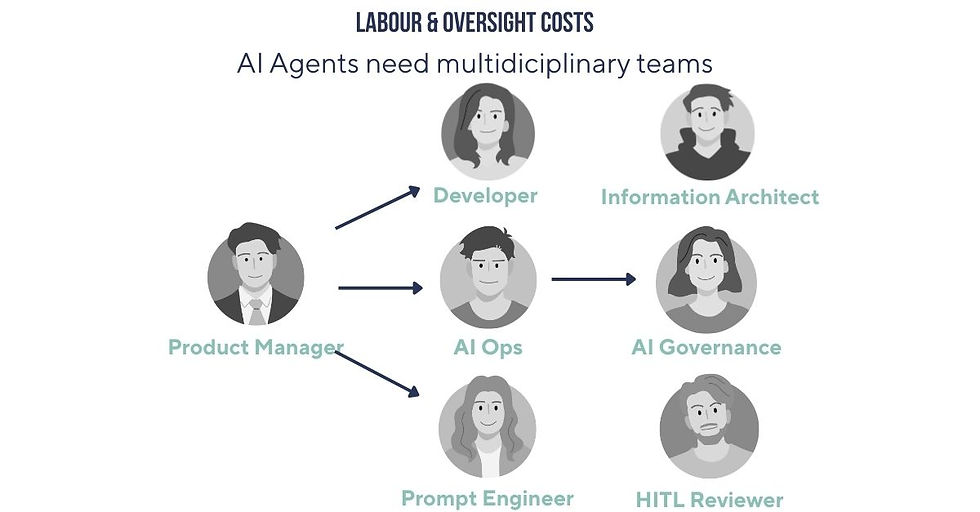

4. Labour, Oversight, and the Myth of Autonomous Systems

One of the quieter misconceptions around AI agents is that autonomy reduces organisational complexity. However, as agents take on more consequential roles, such as advising, deciding, and acting, the requirement for human oversight becomes more structured, not less. The question is no longer whether people remain in the loop, but where they sit and what authority they hold.

Every production-grade agent is supported by a human system that spans design, supervision, and accountability. This is not a transitional phase; it is the operating model. Behind a reliable agent sits a multidisciplinary structure:

engineers responsible for stability and performance,

cognitive and prompt designers shaping reasoning behaviour,

information architects governing memory and knowledge flow,

operations teams monitoring drift and failure,

and domain experts who intervene when judgement matters.

This labour layer is often described as overhead. In reality, it is the mechanism through which trust is earned and sustained. Organisations that fail to budget for it early tend to discover it later, usually after confidence has been lost. Those that acknowledge it upfront signal maturity: an understanding that intelligent systems, like human ones, require stewardship.

5. Explainability as an Investment Requirement

At senior levels, enthusiasm for AI usually collapses because of opacity rather than inaccuracy. Executives are seeking defensible decisions and credible logic: systems whose behaviour can be understood, challenged, and improved.

Explainability has therefore shifted from a technical consideration to an economic one. An agent that cannot articulate how it arrived at an outcome introduces unquantifiable risk, regardless of performance metrics.

Investable agentic systems are designed to surface:

the information and memories they relied upon,

the reasoning path taken,

the degree of confidence in the outcome,

and the conditions under which human escalation is required.

This creates institutional confidence. Leaders can stand behind decisions, auditors can trace logic, and organisations can learn systematically from errors rather than obscure them. In an environment where accountability matters as much as speed, explainability is both an essential safeguard and what makes scale possible.
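One way to make the four elements listed above concrete is to have the agent emit a structured record with every recommendation. The Python sketch below assumes a sales pricing use case; the `DecisionRecord` fields, the `check_escalation` rules, the 0.75 confidence threshold, and the 15% discount limit are all hypothetical choices, and how confidence is estimated is left to the team.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class DecisionRecord:
    """Hypothetical audit record an agent emits alongside each recommendation."""
    question: str
    answer: str
    sources: list[str]          # information and memories the agent relied upon
    reasoning_steps: list[str]  # summarised reasoning path
    confidence: float           # 0.0-1.0; how it is estimated is a design choice
    discount_pct: float = 0.0   # hypothetical business field used by an escalation rule
    escalation_reasons: list[str] = field(default_factory=list)


def check_escalation(record: DecisionRecord, min_confidence: float = 0.75,
                     discount_limit_pct: float = 15.0) -> list[str]:
    """Conditions under which a human must review; thresholds are assumptions."""
    reasons = []
    if record.confidence < min_confidence:
        reasons.append(f"confidence {record.confidence:.2f} below {min_confidence:.2f}")
    if not record.sources:
        reasons.append("no sources cited")
    if record.discount_pct > discount_limit_pct:
        reasons.append(f"discount {record.discount_pct:.0f}% above approval limit")
    return reasons


record = DecisionRecord(
    question="What renewal discount can we offer this account?",
    answer="Recommend 12%, in line with comparable precedent deals.",
    sources=["pricing playbook (hypothetical)", "precedent deal notes (hypothetical)"],
    reasoning_steps=[
        "Matched account segment and contract length to the pricing playbook",
        "Compared against precedent approvals for similar renewals",
    ],
    confidence=0.68,
    discount_pct=12.0,
)
record.escalation_reasons = check_escalation(record)
audit_json = json.dumps(asdict(record), indent=2)  # what a reviewer or auditor would see
print(audit_json)
```

Storing records like these, rather than only final answers, is what lets auditors trace the logic and lets the team review escalated cases systematically.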

A Practical Checklist Before Seeking Funding

To align AI agent proposals with executive expectations:

✅ Quantify revenue impact, not just efficiency

✅ Model full costs, including governance

✅ Show break-even and long-term returns

✅ Be explicit about the operating team

✅ Anchor trust in explainability and memory

A Final Thought

AI agents are increasingly woven into how organisations operationalise decisions and create value. In fact, major enterprise platforms are now explicitly positioning agentic systems as part of the core operating model. For example, Salesforce’s Spring ’26 release emphasises coordinated AI across selling, service, and commerce to drive scalable outcome delivery.

At the same time, fresh industry data shows that while most organisations have tested AI agents, only a small fraction have moved them into mission-critical roles, precisely because trust, transparency, and governance are still the gating factors for strategic adoption.

The leaders who win in 2026 won’t be those with the most agents. They will be the ones who can fund, govern, and defend them with clarity, speaking in the language of revenue, risk, and durable advantage. That is where strategic leverage now resides.

This article is brought to you by Tim Daines, Programme Director at The Cambridge Labs, a capacity-building lab that helps leadership teams move AI agents from promising pilots to defensible, board-ready investments.
